The Evolution of Locobuzz Sentiment Analysis & AI

The master plan

Artificial Intelligence is the future. Still, few companies have the courage to invest in R&D, the patience to endure the setbacks, and the trust in their team’s work. Locobuzz’s aim is to give our clients the best AI: exceeding their expectations, easing their lives, and pushing the boundary of Social Media AI.

Like all great plans, ours had humble beginnings. Our AI journey started with Sentiment Analysis.

Data is the new gold

Training an AI requires immense amounts of data, collected into what we call a dataset. We started with a dataset of 7,000 entries, trained the sentiment AI on it, and reached an accuracy of 63.2%. It was quite apparent that more data was required, so we searched externally and internally for quality data.
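As a minimal sketch of how an accuracy figure like the one above is computed, assuming accuracy means the share of entries whose predicted label matches the human-assigned label: the function name `accuracy` and the four toy labels below are made up for illustration and are not Locobuzz data.

```python
from typing import Sequence


def accuracy(predicted: Sequence[str], actual: Sequence[str]) -> float:
    """Return the fraction of predictions that match the reference labels."""
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if not actual:
        raise ValueError("cannot compute accuracy on an empty dataset")
    correct = sum(p == a for p, a in zip(predicted, actual))
    return correct / len(actual)


# Toy labels, invented purely for illustration.
toy_predicted = ["positive", "neutral", "negative", "positive"]
toy_actual = ["positive", "negative", "negative", "neutral"]

print(f"accuracy = {accuracy(toy_predicted, toy_actual):.1%}")
```

Measured this way, an accuracy of 63.2% means that roughly 63 out of every 100 entries received the same label from the AI as from a human.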

Locobuzz has a well-managed, terabyte-scale database. Using it, we were able to accumulate a large dataset, but that was not enough. We needed variety, so we went to the internet and salvaged every bit of data we could get our hands on. Through these hardships, we accumulated a dataset of 9.3 million entries spread across 38 global languages.

From crude oil to petrol

Raw data is akin to crude oil: very precious, but of no significant value until it is refined. Some major impurities in raw sentiment data are listed below.

- ORM executives have contrasting perceptions of sentiment. For example, “Nice” can be tagged as positive by one agent and neutral by another

- The hashtag #LoveMyCountry is not understood by AI unless it is split into the words “Love my country”

- The Thai language has no space between its words

At last, our efforts to clean up these impurities paid off. The Generic Preprocessor can process any kind of NLP-related data in more than 30 languages and resolve more than 50 issues in the data. This gives our NLP AI a significant boost in performance.
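To make one of these cleanup steps concrete, here is a minimal sketch of hashtag splitting. It is not the Generic Preprocessor itself: the function names `split_hashtag` and `expand_hashtags` are hypothetical, and the sketch assumes hashtags written in CamelCase with ASCII letters, leaving anything else untouched. Languages written without spaces, such as Thai, need a dedicated word segmenter and are out of scope here.

```python
import re

# A run of capitals (acronym), a capitalized or lowercase word, or a number.
_WORD = re.compile(r"[A-Z]+(?![a-z])|[A-Z]?[a-z]+|\d+")
_ASCII_TAG = re.compile(r"[A-Za-z0-9_]+")


def split_hashtag(tag: str) -> str:
    """Split a CamelCase hashtag into space-separated words."""
    body = tag.lstrip("#")
    if not _ASCII_TAG.fullmatch(body):
        return tag  # non-ASCII or empty tags are left unchanged
    words = _WORD.findall(body)
    return " ".join(words) if words else tag


def expand_hashtags(text: str) -> str:
    """Replace every hashtag in the text with its split form."""
    return re.sub(r"#\w+", lambda m: split_hashtag(m.group(0)), text)


print(expand_hashtags("Proud today! #LoveMyCountry"))
```

The sketch keeps the original capitalization of each word; lowercasing, if wanted, would be a separate normalization step.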

Making the smart kid

AI is akin to a child. At birth, it’s pretty much a simpleton, but as it grows it gains experience and knowledge. Some gain experience faster than the rest, hence we call them ‘smart’.

For Sentiment, we were looking for a smart algorithm. After months of experimentation, refinement, and retrials, we were able to build not just one but two algorithms, and we started using them together in hybrid mode. Locobuzz now has a bleeding-edge Hybrid Sentiment AI that is fast to train and very accurate. On the same 7,000-entry dataset, the new AI reaches 76.3% accuracy, up from 63.2%.
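The article does not say how the two algorithms are combined. One common way to run two models in hybrid mode is soft voting, where each model outputs a probability per label and the probabilities are averaged. The sketch below illustrates that idea only; `hybrid_predict`, `model_a`, `model_b`, and their probabilities are hypothetical stand-ins, not the Locobuzz algorithms.

```python
from typing import Callable, Dict, List, Optional

Probs = Dict[str, float]
Model = Callable[[str], Probs]


def hybrid_predict(text: str, models: List[Model],
                   weights: Optional[List[float]] = None) -> str:
    """Average the label probabilities of several models and pick the best label."""
    if weights is None:
        weights = [1.0] * len(models)
    total = sum(weights)
    combined: Probs = {}
    for model, weight in zip(models, weights):
        for label, prob in model(text).items():
            combined[label] = combined.get(label, 0.0) + weight * prob / total
    return max(combined, key=lambda label: combined[label])


def model_a(text: str) -> Probs:
    """Toy stand-in: reacts to the word 'love'."""
    if "love" in text.lower():
        return {"positive": 0.7, "neutral": 0.2, "negative": 0.1}
    return {"positive": 0.2, "neutral": 0.6, "negative": 0.2}


def model_b(text: str) -> Probs:
    """Toy stand-in: reacts to the negation 'not'."""
    if "not" in text.lower().split():
        return {"positive": 0.05, "neutral": 0.15, "negative": 0.8}
    return {"positive": 0.4, "neutral": 0.4, "negative": 0.2}


print(hybrid_predict("I love this", [model_a, model_b]))
print(hybrid_predict("I do not love this", [model_a, model_b]))
```

The appeal of this design is that each model can cover the other's blind spots: in the second example, one toy model misses the negation and the other corrects it.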

A good teacher and a good school

Smart AI requires a customized training procedure. Our Sentiment Algorithm has 2.4 million parameters, which makes it equivalent to a maths equation with 2.4 million parameters. Only when all 2.4 million parameters are set to the correct values does the sentiment AI give the correct result. This requires a statistically correct training procedure and an experienced data scientist.
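To give a feel for where parameter counts like this come from, here is a small sketch that counts the weights and biases in a stack of fully connected layers. The architecture of the Sentiment Algorithm is not described in this article, so the layer sizes below are purely hypothetical and the function `count_dense_parameters` is an illustration only.

```python
from typing import Sequence


def count_dense_parameters(layer_sizes: Sequence[int]) -> int:
    """Count weights plus biases for consecutive fully connected layers.

    A layer mapping n_in inputs to n_out outputs has n_in * n_out weights
    and n_out biases, which is (n_in + 1) * n_out parameters.
    """
    return sum((n_in + 1) * n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))


# Hypothetical sizes: 300 input features, 128 hidden units, 3 sentiment labels.
print(count_dense_parameters([300, 128, 3]))
```

Every one of these numbers is adjusted during training, which is why even a modest network quickly reaches thousands or millions of parameters.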

To perfect the training procedure, we required a cluster of high-performance Graphics Processors (aka a good school) that can handle the computations for 2.4 million parameters. These processors are extremely costly and are billed per minute of usage, so we had to find the best training procedure in the fewest trials. It was a daunting task, and our team did it very well.

Standing strong in the tsunami

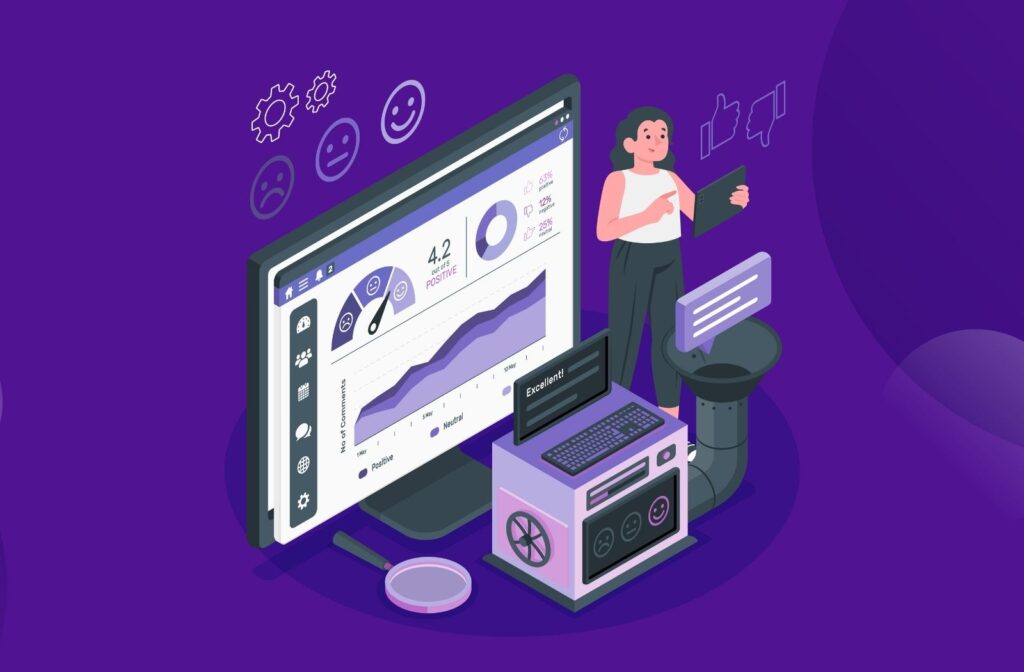

After months of hard work, we were able to pull the entire system together and create an AI that gives an accuracy of 94.3%!

Now, our challenge was the volume of sentiment requests. On a tsunami day, there are over 1,500 sentiment requests per second in about 30 different languages. We needed to create an API engine that could withstand such storms, so our team of data engineers created SentimentAPIEngine. This system supports multithreaded request handling and no-downtime updates, which allows it to run 24 hours a day, 365 days a year. As a result, we cater to our clients without any glitch.
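The internals of SentimentAPIEngine are not described here, but the two properties mentioned above can be sketched in a few lines: a thread pool serves requests in parallel, and the active model is swapped behind a lock so requests keep flowing during an update. The class `HotSwapSentimentService`, the helper `handle_batch`, and the two keyword models are hypothetical and exist only for this illustration.

```python
import threading
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

Model = Callable[[str], str]


class HotSwapSentimentService:
    """Serve predictions while allowing the model to be replaced at runtime."""

    def __init__(self, model: Model) -> None:
        self._model = model
        self._lock = threading.Lock()

    def predict(self, text: str) -> str:
        # Hold the lock only long enough to read the current model reference.
        with self._lock:
            model = self._model
        return model(text)

    def swap_model(self, new_model: Model) -> None:
        # In-flight requests finish on the old model; new ones use the new model.
        with self._lock:
            self._model = new_model


def model_v1(text: str) -> str:
    """Toy stand-in for an older model version."""
    return "positive" if "good" in text.lower() else "neutral"


def model_v2(text: str) -> str:
    """Toy stand-in for an updated model version."""
    lowered = text.lower()
    if "bad" in lowered:
        return "negative"
    return "positive" if "good" in lowered else "neutral"


def handle_batch(service: HotSwapSentimentService, texts: List[str],
                 workers: int = 8) -> List[str]:
    """Process requests on a pool of worker threads, preserving input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(service.predict, texts))


service = HotSwapSentimentService(model_v1)
requests = ["Good service", "Bad delivery"]
before = handle_batch(service, requests)
service.swap_model(model_v2)  # no restart needed
after = handle_batch(service, requests)
print(before, after)
```

Because the lock guards only the reference swap and not the prediction itself, an update never blocks requests for longer than a single assignment.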

Measure of success

For every AI product, we follow a zero-tolerance policy. We have a log of all the complaints we receive for every client we cater to. The goal of the data science team is zero complaints. This is also the measure of our success.

When we receive a complaint, it goes through a verification and correction process. The model is updated every week, and there are continuous revisions. Realizing the value of what we deliver for our clients was all the motivation we needed. Today, we have state-of-the-art sentiment analysis with great accuracy, and we are still pushing our limits to achieve zero complaints.

A basket full of AI

Locobuzz has heavily invested in Computer Vision, Clustering technologies, and recommendation engines. Our key projects are Object Detection, Obscure Optical Character Detection systems, Smart Reply System, Smart Compose System, Contextual Word Cloud, etc.

As with our sentiment AI, we have worked carefully on each of these systems and tried our very best to bring them to zero complaints. These AI systems required a lot of effort to generalize for every client, but our team’s ingenuity and creative ideas made it possible. We are very proud to say that each of these technologies is setting new industry standards.
